8800GTS REVIEW BENCHMARK AND OVERCLOCK


Posted: 2007-01-02. By: video card review. Number of views: 121029


1. Introduction

This article tests two GeForce 8800 GTS video cards based on the new G80 graphics chip. If you plan to buy such a card but for whatever reason are still using an Athlon 64 X2 processor, you may be interested in how much performance the card loses in benchmarks and games on an AMD platform. If your motherboard supports SLI, you can also see how much performance is gained by installing a second GeForce 8800 GTS. Besides examining whether the speed of the AMD Athlon 64 X2 platform is sufficient, the article describes the particulars of installing alternative cooling on GeForce 8800 GTS/GTX cards, overclocking the geometry unit and shader block of the G80 GPU, and voltmodding the GeForce 8800 GTS. Temperature results are given for both air and water cooling.

2. Fundamental technical characteristics of the GeForce 8800 GTX and GeForce 8800 GTS

3. Review of the Chaintech GAE88GTS (GeForce 8800 GTS)

This video card does not differ from other GeForce 8800 GTS cards: it does not use alternative cooling and does not have raised frequencies. The card is supplied in a box of the old design, large and without a transparent plastic window. The card itself is also large, and it is held securely inside the box.

Package contents:

Here is how the video card looks with the cooling system installed:

The cooling system is an aluminum radiator with large copper fins and a heat pipe. It is sufficiently effective and quiet. Through thermal pads the radiator makes contact with all the memory chips, the components of the power circuitry and the NVIO chip. Because of its size, the cooling system covers almost the entire surface of the card and blocks the adjacent slot on the motherboard. The thermal interface is a large quantity of gray thermal paste, which had not dried out completely. Before testing the overclock and temperatures I replaced this paste with Geil thermal paste.

On the first start with this cooling system we had a problem with the power cable: it had curled down into a loop and blocked the chipset fan located directly beneath it on the DFI LanParty UT nF4-D motherboard. The chipset fan on this motherboard is noisy (especially since the chipset voltage had been raised).

The PCB design of the Chaintech GAE88GTS is the same as on the other GeForce 8800 GTS cards released so far, i.e. the reference design. The set of interfaces is standard: two DVI outputs, an HDTV output, a connector for the SLI bridge (MIO interface) and a connector for additional power. The card has a built-in speaker and refuses to start without the additional power connected.

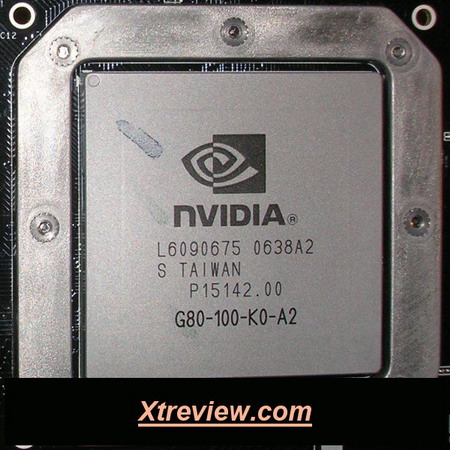

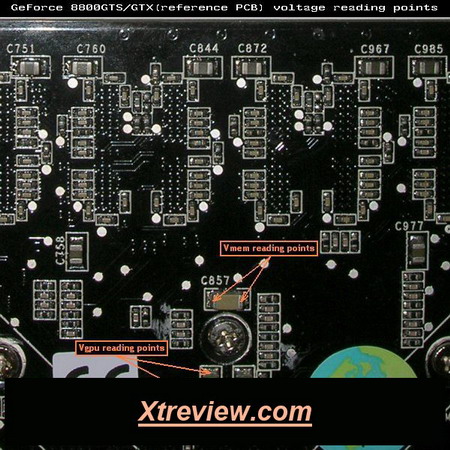

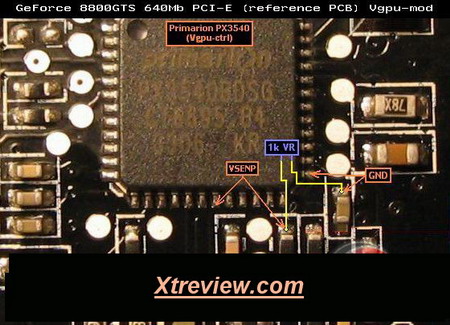

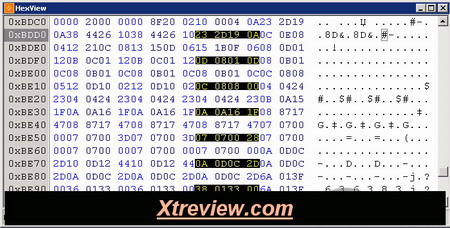

The card carries a G80 GPU of revision A2, manufactured in week 38 of 2006. The chip is very large and is covered with a heat spreader that protects it from chipping. All the mounting holes around the GPU have a small diameter, which restricts the choice of water-cooling systems and forces you to buy water blocks designed specifically for the GeForce 8800 GTS/GTX. Exactly the same problem appeared earlier with the GeForce 7800 GTX.

The card is equipped with 640 MB of GDDR3 memory in the form of ten Samsung K4J52324QE-BC12 chips, each with a capacity of 512 Mbit, manufactured in week 40 of 2006. All the memory chips are located on the front side of the card. The datasheet for this memory is absent from the manufacturer's site, but the part number decoder shows that the access time is 1.25 ns and the operating frequency is 1600 MHz (effective).

On the left side is the NVIO chip of revision A3, manufactured in week 41 of 2006. It handles the interface functions (RAMDAC, dual DVI, HDMI, HDTV). On the one hand this is good, because it makes it possible to introduce changes and improvements without complex rework of the G80 GPU. On the other hand, because a single radiator serves all the hot parts of the card, replacing the cooling system raises the problem of cooling the NVIO chip. Colleagues recently showed that coolers such as the Zalman VF900-Cu and Ice Hammer IH-500V are even less effective than the stock cooling system. That leaves only two options: a CPU cooler whose base completely covers the heat spreader of the G80, or water cooling. With a CPU cooler, it should naturally be installed so that the airflow is directed downward, not upward. With a water block, it is useful to install an additional fan blowing over the card.
To cool the NVIO chip on the first video card I used a large radiator made from an old Socket 370 processor cooler. For the second card everything was done much more simply: a small Zalman radiator was seated on top with thermal paste and secured with wire. Checks showed that both variants are entirely sufficient to cool the NVIO chip. The first variant is of course more reliable and better suited to prolonged use, but the second is sufficient as a temporary solution.

4. Voltmod of the GPU and memory on reference GeForce 8800 GTS cards

The GPU voltage can be measured on capacitor C850 and the memory voltage on C857. They are located on the reverse side of the card, toward the center. Alternative measuring points are capacitors C565, C566 and C567 for the GPU, and C622, C647 and C688 for the memory. They are located not far from the chips that control the corresponding voltages.

By default the memory voltage is 1.81 V. The GPU voltage is 1.27 V in 2D mode; it drops on switching to 3D, and the higher the load, the greater the drop in Vgpu. During the 3DMark tests it was 1.24 V, and under ATITool it fell to 1.23 V. When a similar voltage drop under load occurs on a motherboard (usually on Vcore), a special voltmod, called the Vdroop mod, is used to correct it. Unfortunately, no method is known for removing this unpleasant feature on the GeForce 8800 GTS/GTX.

The GPU voltage on the GeForce 8800 GTS (and on the 8800 GTX) is controlled by a Primarion PowerCode PX3540 chip located in the lower left corner of the reverse side of the card. The datasheet for this model is absent from the manufacturer's site, but the datasheet of the previous model, the PX3535 (PDF, 82 KB), can be downloaded; that controller was used earlier on the GeForce 7800 GTX 512 and GeForce 7900 GTX.
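As a small illustration of the voltage drop under load described above, the quoted readings (1.27 V in 2D, 1.24 V in 3DMark, 1.23 V in ATITool) can be converted into a drop in millivolts and in percent. This is only a sketch in Python: the function name and the layout of the data are our own, and only the three voltages come from the measurements in the text.

```python
from typing import Tuple

# Sketch: quantify the GPU voltage drop under load from the readings in the text.
# Only the three voltages come from the article; all names are illustrative.

IDLE_2D_V = 1.27  # Vgpu measured in 2D mode

LOAD_READINGS_V = {
    "3DMark": 1.24,
    "ATITool": 1.23,
}


def droop(idle_v: float, load_v: float) -> Tuple[int, float]:
    """Return the drop as (whole millivolts, percent of the idle voltage)."""
    drop_mv = round((idle_v - load_v) * 1000)
    drop_pct = round((idle_v - load_v) / idle_v * 100, 1)
    return drop_mv, drop_pct


if __name__ == "__main__":
    for name, volts in LOAD_READINGS_V.items():
        mv, pct = droop(IDLE_2D_V, volts)
        print(f"{name}: {mv} mV below the 2D voltage ({pct}%)")
```

With the figures above this gives a drop of 30 mV under 3DMark and 40 mV under ATITool, which is comparable to the roughly 0.04 V that the GPU voltmod is expected to add.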
From the voltmod point of view, the only difference between the PX3540 and the PX3535 is the arrangement of the pins. The voltmod for the GeForce 7800 GTX 512 and GeForce 7900 GTX was performed by connecting pin 27 (VSENP) to ground through a variable resistor. On the Primarion PowerCode PX3540, VSENP is on pin 18, and its initial resistance to ground is 32 Ohm. To raise the GPU voltage, connect this pin to ground through a 1 kOhm variable resistor preset to its maximum. After this, Vcore should rise by approximately 0.04 V. Since the pins of the controller chip are too small and inconvenient for soldering, it is better to use the points indicated in the following picture for the voltmod:

A pencil voltmod of the GPU is impossible on these cards. Around the PX3540 there is no SMD component (resistor or capacitor) connected directly between VSENP and ground. Shading the resistors connected to VSENP achieves nothing.

Memory voltmod

The memory voltage controller is an Intersil ISL6549 chip (datasheet, 437 KB), located in the upper left corner of the reverse side. For the memory voltmod, connect pin 4 of the ISL6549CB (Vmem feedback) to ground through a 20 kOhm variable resistor.

Pencil voltmod of the memory: to raise the memory voltage, shade the resistor marked in the picture as Pencil vmem-mod. Then verify the resistance obtained after shading against this table:

Reverse voltmod of the memory: to lower the memory voltage, connect pin 4 of the ISL6549CB (Vmem feedback) to pin 13 (Phase) through a 20 kOhm variable resistor, or shade with a pencil the resistor marked in the picture as Pencil reverse vmem-mod.

Determining the optimum delta and memory latencies of the 8800 GTS

In the G80 graphics chip the shader block (shader domain) runs at a considerably higher frequency (approximately 2.3 times) than the remaining blocks of the GPU. There is, however, no fixed delta between the frequencies as there was in G7x GPUs. Instead, the BIOS of the card stores the core frequency and the shader frequency separately. By default these frequencies are 576 (1350) MHz on the GeForce 8800 GTX and 513 (1188) MHz on the GeForce 8800 GTS. When the core frequency is changed in an overclocking program, the shader frequency automatically changes in the same direction, but by roughly twice as much. The current shader frequency can be monitored with RivaTuner v2.0 RC16.2 and with Lavalys Everest 2006 (starting from version v3.50.795 beta). The shader frequency that will result from an overclock can also be calculated. Since the shader frequency can change only in steps of 54 MHz, the calculated value must be rounded to the nearest integer.

But how do you determine the optimum shader frequency? First, find the overclocking limit without changing the frequencies in the BIOS. Then, to determine which of the two frequencies is holding back the overclock, set the shader frequency in the BIOS first 54 MHz higher and then 54 MHz lower. If, after checking the GPU overclock in each of these three variants, the best frequencies are obtained with the shader frequency unchanged, there is no need to change it.
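The exact formula is not reproduced in the text, so the Python sketch below is only an assumption about how such an estimate could work. It scales the shader clock by the same ratio as the core clock, starting from the default BIOS pair, and then snaps the result to the 54 MHz step. The function names, the proportional-scaling rule and the choice of snapping to a multiple of 54 are ours, not the author's; only the default clocks (513/1188 MHz for the GTS, 576/1350 MHz for the GTX) and the step size come from the article.

```python
from typing import List

# Sketch under stated assumptions: proportional scaling plus 54 MHz snapping.
# This is NOT the article's formula, which is not available here.

SHADER_STEP_MHZ = 54  # step size quoted in the article


def estimate_shader_clock(core_mhz: float,
                          default_core_mhz: float = 513,
                          default_shader_mhz: float = 1188) -> int:
    """Estimate the shader clock for a given core clock (assumed rule)."""
    # Assumption: the shader clock keeps the BIOS shader/core ratio.
    raw = core_mhz * default_shader_mhz / default_core_mhz
    # Snap to the nearest whole number of 54 MHz steps.
    steps = round(raw / SHADER_STEP_MHZ)
    return steps * SHADER_STEP_MHZ


def bios_candidates(shader_mhz: int) -> List[int]:
    """The three BIOS shader settings to compare: one step down, as is, one step up."""
    return [shader_mhz - SHADER_STEP_MHZ, shader_mhz, shader_mhz + SHADER_STEP_MHZ]


if __name__ == "__main__":
    print(estimate_shader_clock(513))             # default GTS pair
    print(estimate_shader_clock(576, 576, 1350))  # default GTX pair
    print(bios_candidates(1188))                  # settings to try on a GTS
```

The second helper simply lists the three BIOS settings that the procedure above compares: one step lower, unchanged, and one step higher.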
If the variant with the lowered or raised frequency proves better, you can try changing the shader frequency further in the same direction with the same step (54 MHz) until the optimum ratio is found. On both tested Chaintech GeForce 8800 GTS cards the GPU overclocking limit was reached without changing the shader frequency. After the cooling was replaced, both GPU frequencies rose by an amount that again required no change to the shader frequency in the BIOS. Things may well turn out differently on other samples of the GeForce 8800 GTS/GTX, but such fine tuning of both GPU frequencies is worth the time only if you have a sufficiently powerful CPU. Otherwise you may simply not notice any difference between the shader block running at 1566 or 1620 MHz.

The latencies of the Samsung 1.25 ns memory on GeForce 8800 GTS cards are the following:

The current latencies can be changed with the program NiBiTor. The latest version (3.1) of NiBiTor provides no way to read the latencies from the BIOS or to save modified latencies to it, but nothing prevents you from changing them by hand with any hex editor :-)

On one GeForce 8800 GTS changing the latencies achieved nothing, but on other samples whose memory overclocks less well, reducing the latencies may well give a small gain in performance. A comparison of the memory latencies in the BIOSes of several reference GeForce 8800 GTS (Chaintech, Asus, BFG) and 8800 GTX (EVGA, BFG, MSI) cards showed that the latencies coincide almost completely everywhere, except that timing4 is 28000707h on the GeForce 8800 GTS and 00000707h on the GTX. This difference is insignificant and has no effect.

Testing 8800GTS

6.1. Test configuration and drivers

Testing was done at a room temperature of +21°C.
To test a video card as powerful as the GeForce 8800 GTS, the processor, motherboard and power supply in the test configuration were replaced. A quick check showed that in most benchmarks and games even the lowest dual-core model is preferable to a single-core processor, even one with twice the L2 cache and a 100 MHz higher overclock. Moreover, there are far more owners of the Athlon 64 X2 3800+ than of the Opteron 148. The motherboard was a DFI nF4, chosen for its ability to power the memory stably from the +5V line, which made it possible to overclock memory on Winbond BH-series chips without a voltmod of the 3.3V line in the power supply. The Golden Power 450W power supply could not cope with even one non-overclocked GeForce 8800 GTS, so a Chieftec 620W was used instead; its power was sufficient even for SLI operation with two such cards, overclocked and voltmodded.

Configuration:

Operating system and drivers:

NVIDIA ForceWare driver settings:

6.2. Temperature conditions of the 8800 GTS

To determine the temperature of the graphics processor at idle and under load, the monitoring module of RivaTuner 2.0 RC16.2 was started. We waited until the temperature stabilized and recorded the idle value. Then ATITool 0.25 beta 16 was run for 10 minutes, after which the maximum temperature was recorded. Only one video card was installed in the system while the temperatures were measured. Temperature was measured in several modes, which differ in frequencies (with and without overclock), voltage and cooling type.
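The measurement procedure above (wait for the idle temperature to settle, then take the maximum over the 10-minute load run) can be sketched in Python as follows. Everything here is hypothetical: the helper names, the window and tolerance, and the sample readings are made up for illustration and are neither the article's data nor a RivaTuner log format.

```python
from typing import List, Optional


def stabilized_value(samples: List[float],
                     window: int = 5,
                     tolerance: float = 1.0) -> Optional[float]:
    """Return the latest reading of the first run of `window` consecutive
    samples that stay within `tolerance` degrees of each other, or None
    if the readings never settle."""
    for end in range(window, len(samples) + 1):
        recent = samples[end - window:end]
        if max(recent) - min(recent) <= tolerance:
            return recent[-1]
    return None


def load_peak(samples: List[float]) -> float:
    """Maximum temperature seen during the load run."""
    return max(samples)


if __name__ == "__main__":
    # Made-up illustration values in degrees Celsius, not measurements.
    idle_log = [48, 50, 52, 53, 54, 54, 54, 54, 54]
    load_log = [60, 68, 73, 76, 77, 78, 78]
    print("idle:", stabilized_value(idle_log))
    print("load:", load_peak(load_log))
```

A stricter tolerance or a longer window makes the idle reading slower to accept but less sensitive to a brief plateau while the card is still warming up.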

If automatic fan speed control is left on, the fan settles at 1380 RPM; in this case it is audible, but quiet in comparison with the other sources of noise (chipset cooler, power supply). The temperature in this mode is not very high, but the headroom for overclocking with a voltmod is small. Before overclocking I set the fan speed to maximum (2730 RPM), which lowered the GPU temperature under load by 9°C and considerably increased the noise. Even in this mode the cooler was not as loud as the cooler on Radeon X1800/X1900 series cards (set to 100%). After overclocking without replacing the cooling, the GPU temperature under load rose by 3 degrees. Next, the memory was voltmodded, the GPU cooling was replaced with water cooling, and two fans were installed to blow over the card. As a result the GPU temperature fell by 9°C, but the overclock stayed the same. The next GPU overclocking step after 612 MHz is 648 MHz, and stable operation at that frequency required either a lower temperature or a higher GPU voltage.

6.3. Testing: benchmark and game performance of the 8800 GTS

The following benchmarks and games were used for testing:

The settings in the benchmarks were left unchanged, while in the games they were set to maximum quality. The only exception is the soft shadows option in F.E.A.R.: it was switched on in the mode without AA and AF and switched off with 4xAA and 16xAF, because of some trouble with the game. In Company of Heroes, Need for Speed: Carbon and Tom Clancy's Rainbow Six: Vegas, the AA and AF modes were set through the ForceWare driver. In the remaining games the built-in options were used. All tests of the GeForce 8800 GTS (both on a single card and on two in SLI mode) were done in three modes with different frequencies:

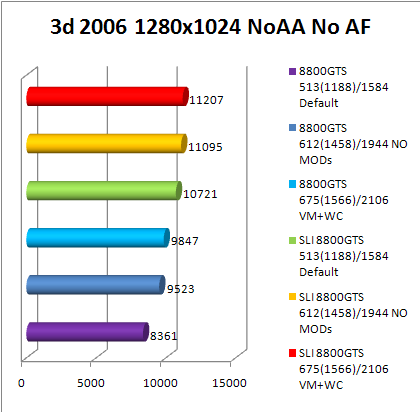

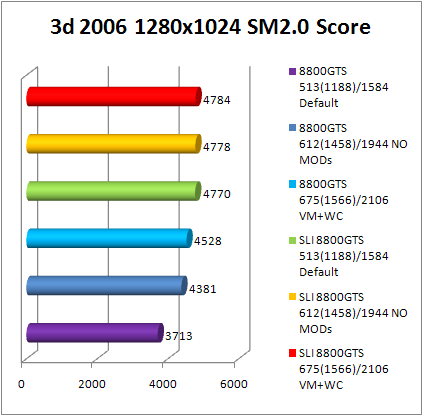

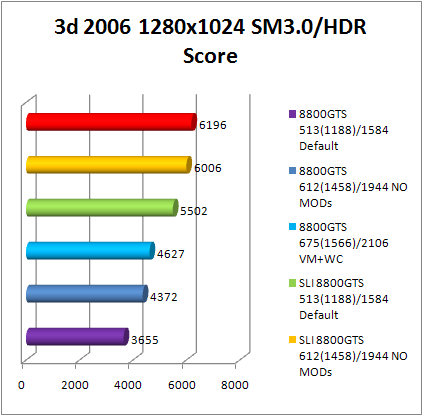

The overall 3DMark06 result is influenced quite strongly by the score obtained in the CPU tests (CPU score), which in general does not depend on the speed of the video card. The Athlon 64 X2 3800+ @ 2900 MHz has a CPU score of about 2200; this is approximately twice what a single-core Athlon 64 at the same frequency shows, and 1.5 times less than an average overclocked Core 2 Duo. With such a difference in CPU score, the difference in the overall result can reach several thousand marks even when the SM2.0 score and the SM3.0/HDR score are not processor dependent. With two GeForce 8800 GTS cards in SLI mode, however, the speed of the processor limits the result even in the SM2.0 tests.

The more powerful the video card, the more strongly the speed of the processor shows in 3DMark05. Here the gain from overclocking the video card and from SLI appeared only in Canyon Flight; the first two tests showed identical results, 54 fps in Return to Proxycon and 43 fps in Firefly Forest.

3DMark03 remains the only benchmark in which high results can be obtained with powerful video cards on a weak system. The first test, Wings of Fury, yields no more than 500-520 fps on the Athlon 64 X2 at 2900 MHz, while approximately twice as much can be obtained with an overclocked Core 2 Duo. The speed of the processor barely affects the result in the remaining three tests.

3DMark2001SE reacts to the video card frequencies and to SLI only in the last test, Nature. But even there, obtaining more than 628 fps requires raising the processor frequency above 2900 MHz. The number of processor cores does not matter in 3DMark2001SE.

In Aquamark3 the speed of the processor is insufficient to obtain a gain not only from SLI but also from overclocking. Aquamark3 does use the second processor core, but it adds no more than 8% to the result.

First Encounter Assault Recon (F.E.A.R.) v1.0.8

In the modes where the result is limited by the processor, the minimum frame rate stays at 50-60 fps, which is entirely sufficient for normal gaming. The performance of a single card is sufficient at 1024x768 and 1280x1024, and at 1600x1200 if it is overclocked. One 8800 GTS is not enough for higher modes, but with two 8800 GTS in SLI it is possible to play in all modes up to 2048x1536.

Quake 4 v1.2.0.2386

In Quake 4 the processor limit is around 110 fps, which is twice the minimum fps in F.E.A.R. The thresholds, however, came out the same: 1280x1024 on one card at default frequencies, 1600x1200 on an overclocked card, and 2048x1536 only in SLI. There are no results for 1920x1440 because we did not succeed in setting this resolution in the game. In fact, all resolutions above 1600x1200 were absent from the game menu, and 2048x1536 could be set only with the command-line parameter quake4.exe +set r_mode 9.

Prey v1.2.116

A previous review found that in Prey version 1.0.103 performance does not increase at all in SLI mode and is even lower. This problem disappeared after installing the patch that updates the game to version 1.2.116. Two powerful cards are not needed in this game, though: the test results show that one 8800 GTS is entirely sufficient for playing in any mode up to 1920x1200. A dual-core AMD processor is also sufficient. The game uses the Quake 4 engine, so we had the same problem when setting resolutions above 1600x1200. The game could not be tested at 1920x1440 and 2048x1536, but the command line prey.exe +set r_mode 16 gave a better result.

Serious Sam 2 v2.070

To load one or even two 8800 GTS cards in Serious Sam 2, a processor faster than the Athlon 64 X2 @ 2900 MHz is not required.
Starting from 1280x1024, the speed in the game is determined by the video cards. On one 8800 GTS it is possible to play at 1280x1024 without overclocking and at 1600x1200 with overclocking. In SLI mode it is possible to play without slowdowns at any resolution.

Tom Clancy's Splinter Cell: Chaos Theory v1.05.157

In Splinter Cell: Chaos Theory a single 8800 GTS, in contrast to SLI, cannot handle resolutions higher than 1600x1200. There is no shortage of processor speed.

Company of Heroes v1.0.3

The settings in the game were set for maximum quality:

With such settings the game is very demanding both on the video card and on the amount of video memory. As in many other games, a single 8800 GTS is sufficient up to 1600x1200, and at 1600x1200 itself it is desirable to overclock the card. SLI does give a gain, but for Company of Heroes one 8800 GTX with 768 MB of video memory is preferable to SLI of two 8800 GTS, because at resolutions above 1600x1200 video memory usage already exceeds 620 MB. The speed of the processor limits the minimum frame rate to 55 fps. The first core is loaded almost completely and the second only by half. Optimization for dual-core processors in this game leaves much to be desired.

Half-Life 2: Lost Coast

In SLI mode the cards were tested with driver versions v97.02 and v97.44, but the results at all resolutions were the same as with a single card. Earlier testing of two GeForce 7900 GS cards showed that a gain from SLI is possible in this game, but with the 8800 GTS there is none. Most likely the problem lies in the ForceWare driver and will possibly be corrected in one of the following versions. Even with the display of the GPU load balance enabled, the corresponding bar did not appear on the screen after the game started.
To see the load, the SLI performance mode option in the driver control panel had to be changed from NVIDIA recommended to custom. After this it was obvious that the driver really does work in SLI mode and that the load is distributed equally between the graphics processors, but repeated testing with this option showed that there is no gain from SLI. In Half-Life 2, however, SLI is not necessary: testing showed that one 8800 GTS is entirely sufficient for the game at any resolution. The processor limits the frame rate at a level above 100 fps, which is sufficient.

Need for Speed: Carbon v1.2

NFSC Custom Resolution Launcher v2 was used to set the resolutions 1920x1440 and 2048x1536. SLI support in Need for Speed: Carbon is in the same state as in the previous game of the series: the results obtained on a single card did not differ from the results in SLI. In contrast to Most Wanted, however, Carbon makes full use of a dual-core processor.

Rainbow Six: Vegas

The game uses Unreal Engine 3.0, so the load on the video card and processor was expected to be high, but the results obtained exceeded all my expectations. The settings in the game were as follows:

The option that most strongly affects speed in this game is shadow quality. Changing the shadow quality from Very low to High changed the fps by approximately 3.5 times! But even if we set the minimum shadow quality and thereby considerably reduce the load on the video card, the load on the central processor remains very high and reaches 97-98 percent on each core of the Athlon 64 X2 3800+ @ 2900 MHz. Performance was measured in the area at the beginning of the game:

The screenshot above shows 21.3 fps in the light mode of 1024x768 without AA and AF on a single 8800 GTS running without overclock. This is clearly insufficient for comfortable gaming.
And here both processor cores are loaded almost to 100%. If we increase the resolution, the speed drops further. So in this game performance is limited both by the processor and by the video card.

6.4. Maximum results in benchmarks

To obtain the maximum results, the processor and video cards were overclocked to the maximum frequencies at which they were able to pass the tests. The processor and the GPUs of both video cards were cooled by water at a temperature of about +10°C. The operating system was Windows XP SP2; the motherboard BIOS and the drivers were the latest versions. Results on a single GeForce 8800 GTS:

Results on two GeForce 8800 GTS in SLI mode:

As you can see, Athlon 64 processors, even dual-core ones, do not make it possible to obtain good benchmark results with a video card as fast as the GeForce 8800 GTS. A more or less decent result is obtained only in 3DMark03. The time has come to replace the test platform.

Conclusion

Like all GeForce 8800 GTS cards produced so far, the Chaintech GAE88GTS has the reference design and cooling system; the advantages and shortcomings listed below therefore apply to all video cards of this series.

Pluses:

Minuses:

So what is the answer to the final question: is an A64 X2 enough for such a video card? In the majority of the tested games the speed of this processor proved sufficient to guarantee the minimum frame rate in the modes most suitable for this card (1280x1024 and 1600x1200 with 4xAA and 16xAF). Two games stand out as exceptions in which a faster platform would bring a benefit: Company of Heroes, which loads the processor quite heavily, and Rainbow Six: Vegas, which leaves the impression of an insufficiently optimized product. Indeed, why should game developers spend many months on optimization when the GeForce 8800 GTS/GTX and Intel Core 2 Duo are on the market? So this year there will be more such games.

As for using two GeForce 8800 GTS cards in SLI mode, everything is simple: it is better to take one GeForce 8800 GTX. Only six of the nine tested games gained from SLI, and if the list is enlarged this ratio will remain at approximately the same level.

